Scientists are starting to roll out the next generation of earthquake forecasts, based on a smorgasbord of theoretical advances. While California has been using some features of this new approach, Italy is breaking new ground with a system that will issue routine seismic forecasts for the whole country—in technical terms, an operational system. Leaders in this effort explain and defend their approach in two articles in the September issue of the journal Seismological Research Letters (SRL).

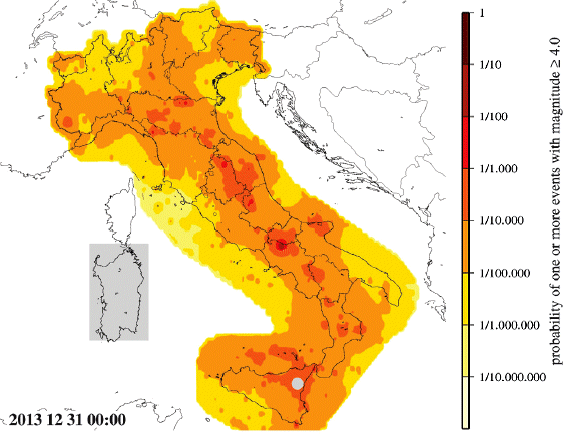

The Italian system, now in beta testing, is described in an SRL article by three scientists from the Seismic Hazard Center of the National Institute of Geophysics and Volcanology. It will create products similar to the map below, showing a forecast for earthquakes of magnitude 4 or greater during the first week of 2014.

We’re used to weather forecasts that give the odds of rain tomorrow. The Italian operational earthquake forecasts will work essentially the same way. The difference with earthquakes is that on any given day, or even in any given month or year, the odds of one happening are quite small. Seismologists know that, and the public will have to learn it as well. Once people do, they should be less susceptible to alarmists, cranks and frauds. This will be a good thing.

Let’s take a dramatic example. We all know that big earthquakes have aftershocks. For a few days, earthquakes become hundreds, even thousands of times more likely! But the odds of a sizeable aftershock, one within one magnitude unit of the mainshock, are still only about 1 percent, and even that holds only for the first two or three days afterward.

That doesn’t sound like much, and it isn’t, but that level of information is still powerful. Consider this: Would people buy a lottery ticket if the state temporarily raised the chance of winning a hundredfold? They probably would; after all, that is much like what they do when the prizes grow large.
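To get a feel for the aftershock numbers above, here is a rough back-of-the-envelope sketch in Python. It is not the Italian system's method. It assumes earthquakes arrive as a simple Poisson process, and it assumes that the "hundreds, even thousands of times" gain applies to sizeable aftershocks as well, which the figures quoted here do not spell out. The function names are made up for illustration.

```python
import math


def window_probability(daily_rate: float, days: float) -> float:
    """Chance of at least one event in the window (Poisson assumption)."""
    return 1.0 - math.exp(-daily_rate * days)


def rate_from_probability(prob: float, days: float) -> float:
    """Daily rate that would produce the given chance over the window."""
    return -math.log(1.0 - prob) / days


# Figures taken from the text: roughly 1 percent over roughly three days.
WINDOW_DAYS = 3.0
ELEVATED_ODDS = 0.01

elevated_rate = rate_from_probability(ELEVATED_ODDS, WINDOW_DAYS)

# Assumption: the same gain of 100x to 1000x applies to sizeable events.
for gain in (100, 1000):
    background_rate = elevated_rate / gain
    background_odds = window_probability(background_rate, WINDOW_DAYS)
    print(f"gain {gain:>4}x: background odds about {background_odds:.4%}, "
          f"elevated odds about {ELEVATED_ODDS:.0%}")
```

Under those assumptions, the ordinary three-day odds work out to somewhere between one in ten thousand and one in a hundred thousand. That gap is the whole point: a forecast of 1 percent is small in absolute terms and enormous relative to a normal day.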