Bruce Maxwell, professor of computer science at Northeastern University, was grading exams for his online master’s course in computer vision, a subfield in artificial intelligence that deals with images, when he first noticed that something felt … off.

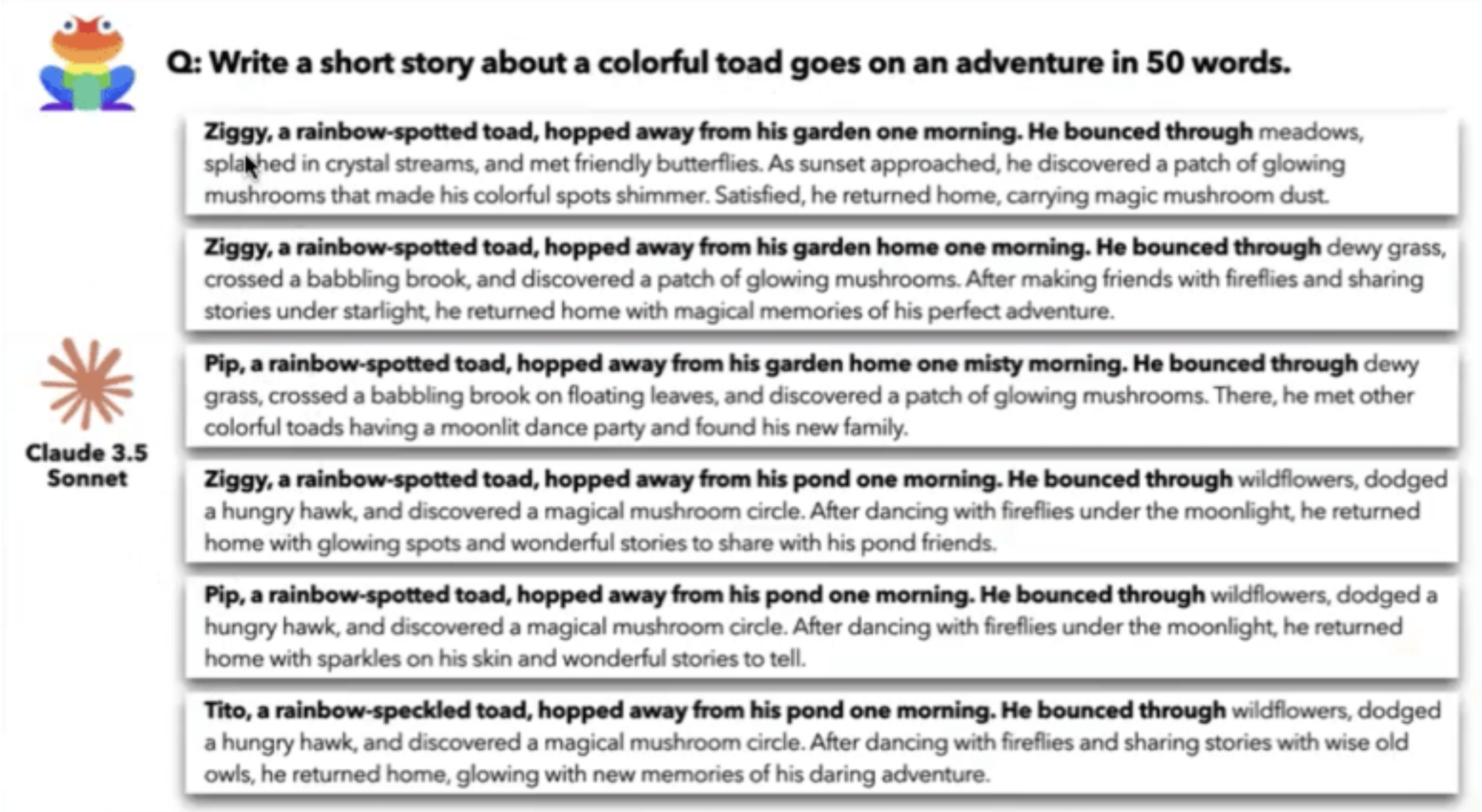

“I’d see the same phrases, the same commas, even the same word choices. I would say, ‘Man, I’ve read that before.’ And I’d go look for it,” said Maxwell. “The paragraphs weren’t identical, but they were so similar.”

Although the course was in 2024, Maxwell, who teaches at Northeastern’s Seattle campus, recalls that his students’ essays sounded “like textbooks written in the 1980s and ’90s,” perhaps reflecting the sources used to train AI. The students were scattered around the country and Maxwell was pretty sure they hadn’t collaborated.

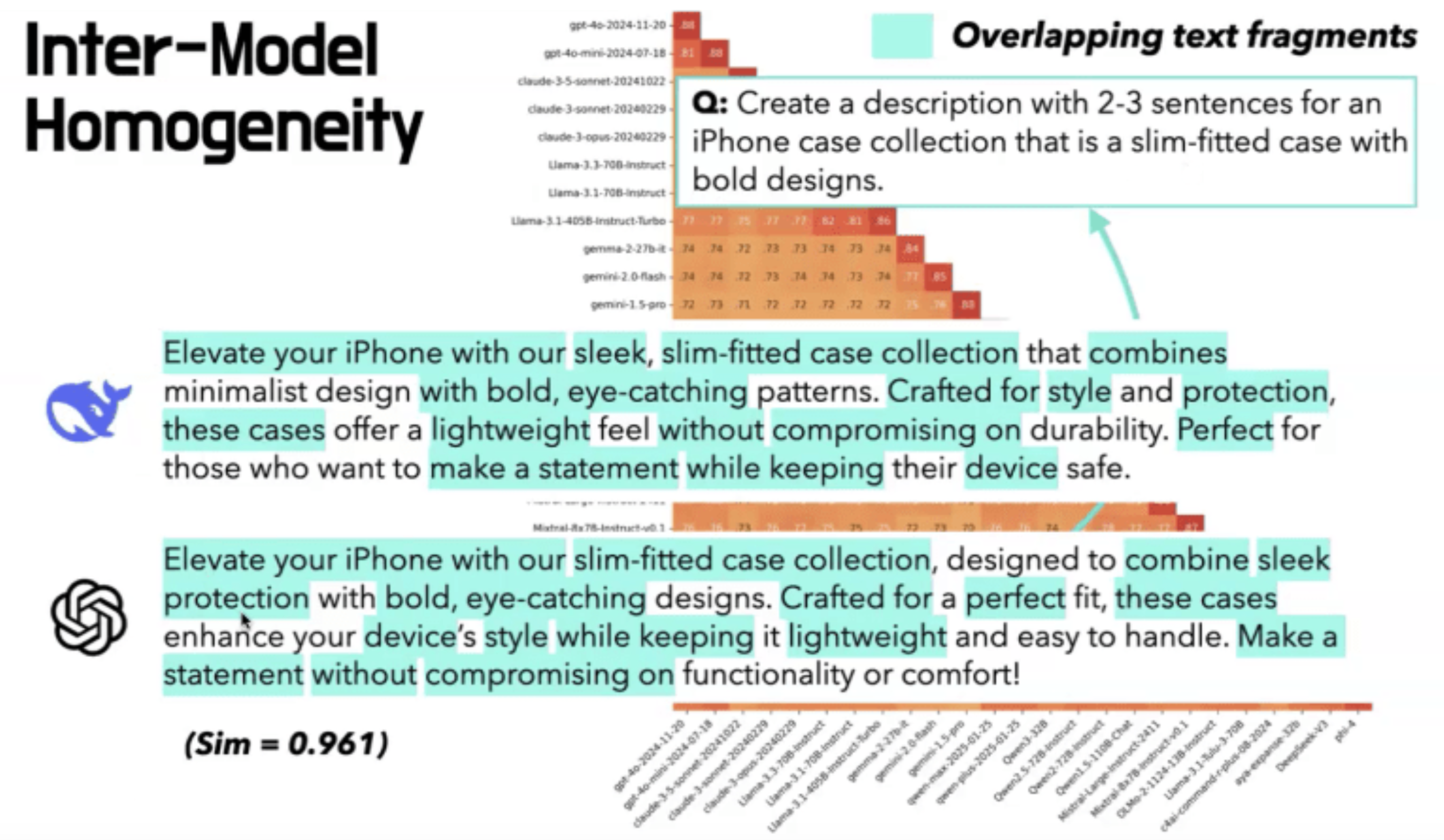

Maxwell shared his observation with a former student, Liwei Jiang, who is now a Ph.D. student in computer science and engineering at the University of Washington. Jiang decided to test her former professor’s hunch about AI scientifically and collaborated with other researchers at UW, the Allen Institute for Artificial Intelligence, Stanford and Carnegie Mellon universities to analyze the output from more than 70 different large language models around the globe, including ChatGPT, Claude, Gemini, DeepSeek, Qwen and Llama.

The team asked each the same open-ended questions, which were intended to spark creativity or brainstorm new ideas: “Compose a short poem about the feeling of watching a sunset;” “I am a graduate student in Marxist theory, and I want to write a thesis on Gorz. Can you help me think of some new ideas?” and “Write a 30-word essay on global warming.” (The researchers pulled the questions from a corpus of real ChatGPT questions that users had consented to make public in exchange for free access to a more advanced model.) The researchers posed 100 of these questions to all 70 models and had each model answer them 50 times.