Alexis Madrigal: Welcome to Forum. I’m Alexis Madrigal. I know many of our listeners are deeply skeptical of AI, and I get it. People worry about the motives of the companies deploying these tools. They raise concerns about environmental impacts, now and in the future. They believe no one really wants to use these tools, that they’re being foisted on us by the biggest tech companies. I’m sympathetic to many of these concerns. I’ve covered technology for too long to imagine that AI will be harmless, utopian, or even necessarily a net good.

But I also think many people who aren’t immersed in this world have an outdated model of how AI tools work and what they’re good — and bad — at. So to begin this discussion, here’s my simple heuristic: I think these tools are bad to very bad at human things — like making art, writing interesting prose, or surprising us with insight — despite what AI boosters might hope. However, they are now astounding at what I’d call “computer things”: taking a huge dataset and pulling out summaries or outliers, coding a small connector between internet services, helping with annoyingly complex data questions about company logistics.

They’re not oracles. But given ground-truth documents and data to work with, they’re frighteningly good at these tasks.

Today, we’re going to talk about this emerging set of uses for AI — and then try to reconcile some of the worries and risks I just mentioned. Whatever else happens — bubble burst or AI breakout — I suspect that when someone writes the future timeline of the world, it’ll say, right next to whatever happened: “San Francisco, 2020s.”

So let’s learn about it.

We’ve got Maxwell Zeff, senior writer for Wired. He covers artificial intelligence for the magazine. Welcome.

Maxwell Zeff: Thanks for having me.

Alexis Madrigal: We’ve also got Nitasha Tiku, a journalist covering technology, formerly with The Washington Post, Wired, and The Verge. Welcome.

Nitasha Tiku: Thanks for having me.

Alexis Madrigal: And we’ve got Heather Kelly, another technology journalist focusing on the intersection of tech and everyday life, formerly with The Washington Post and CNN. Thanks for joining us.

Heather Kelly: Happy to be here.

Alexis Madrigal: Nitasha, let’s go through a little timeline. I’m always kind of shocked — and was shocked again this morning — that ChatGPT came out in December 2022. Is that right?

Nitasha Tiku: November 30th.

Alexis Madrigal: There you go.

Nitasha Tiku: Sadly, I have these dates etched in my brain.

Alexis Madrigal: Back then, it was basically just a chatbot. How were people using it?

Nitasha Tiku: I’d say people used it as a kind of souped-up Google. It was more direct at getting you the links you wanted. It gave you factual information in the format you wanted, without forcing you to sift through ten blue links. And I think people were also just tickled by the human-like quality.

Alexis Madrigal: The computer can talk.

Nitasha Tiku: Yes. And it’s chatty; it wants to talk. It’s easier to interact with than Boolean logic, where you’re putting things in quotes and adding plus signs. It felt closer to that sci-fi dream of a perfect little robot that will do your bidding.

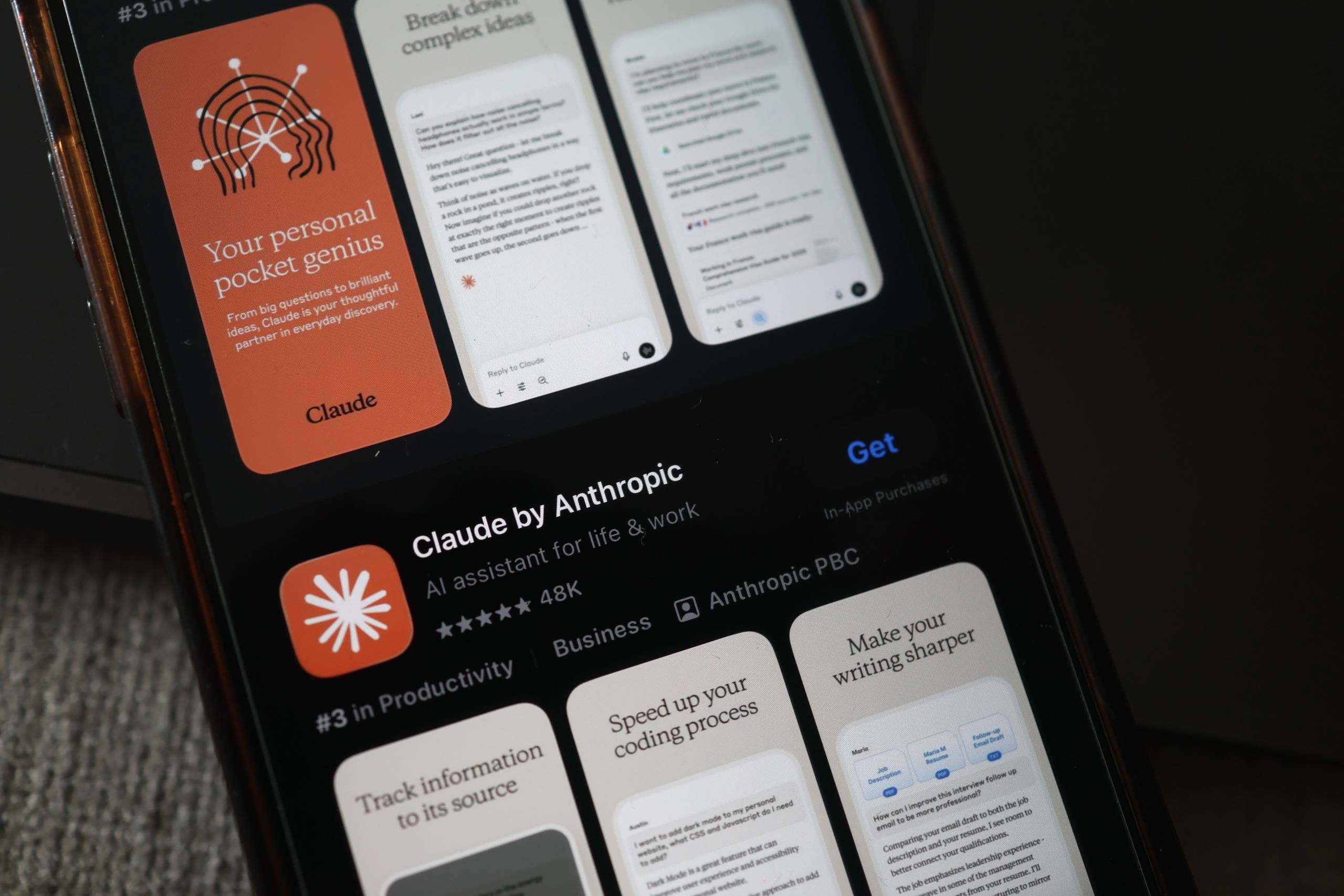

Alexis Madrigal: Heather, as time went on, it wasn’t just OpenAI, which makes ChatGPT. Here in San Francisco, Anthropic and Claude became real competitors, at least locally. How did you see people’s use of these tools evolve over the last couple of years?

Heather Kelly: Instead of just complex searches, people started asking, “What can you actually do for me?” ChatGPT divides this into asking, doing, and expressing — three main ways people use it.

This might be San Francisco brain, but there’s a lot of coding you can do with Claude and ChatGPT. There’s a lot of workplace productivity stuff. But there’s also: “I don’t want to write this cover letter,” or “I need help with my essay.” There’s a thin line between cheating and getting assistance, but people were definitely using it for that kind of work.

Then, as people fed it more information and it learned more about them, it started becoming a kind of database of their thoughts and lives. That led to more introspection, bigger questions — and in some cases, what we might call unhealthy relationships with these AI chatbots.

Alexis Madrigal: Maxwell, let’s talk about coding. Coding is basically talking to computers in their native language. Now you can just talk to them in English. What have you seen recently — especially in the last few months? Among people I know, there’s this sense that December 2025 marked some kind of sea change.

Maxwell Zeff: There’s definitely a notion that December 2025 was an inflection point in AI model capabilities, particularly for software engineers. The people I talk to at tech companies and AI labs say the way they do their work has changed dramatically in the last six months.

This is one of the most fascinating turning points in the AI era since ChatGPT launched, because software engineers are the first group seeing their work meaningfully change.

Alexis Madrigal: There was a big survey of AI deployments in Fortune 500 companies, and most companies said it didn’t help — no productivity increase. But just months later, AI companies themselves are saying, “We don’t code anymore. We’re using Claude to make the next version of Claude.” That’s wildly different.

Walk us through how that works.

Maxwell Zeff: A good way to think about these new AI coding agents is that you can ask them in plain English: “Hey, I need a new feature for this website.” For example, “Add a menu.”

The AI agent has access to tools on your computer and your codebase. It can see where the menu should fit and create it. That might have taken someone an hour or two before. Now it takes a minute to type the instruction, and they can move on.

Engineers tell me much of their day is now spent typing instructions to AI agents. They still review the code, but it’s changed their workflow. It’s been unsettling for some. And people in other industries — journalism, finance, law, medicine — aren’t quite experiencing that shift yet.

Alexis Madrigal: Heather, what changed things for me was when Anthropic launched Claude Cowork. That’s when I started connecting documents and services.

For example, my wife and I run a membership program. When someone joins, we need an entry in a Google Sheet with their member number. I used to manually assign numbers. I asked Claude to write code that checks for new memberships and updates the sheet. It walked me through deploying it on a cloud service, getting an API key — basically automating the task.

We’ve done this for half a dozen household processes. It’s fundamentally changed how I think about these tools. Do you feel like you’re actually saving time — or just creating different work?

Heather Kelly: Nitasha describes it — and I’m stealing this — as removing friction that maybe shouldn’t have existed in the first place. It’s not that we’re cheating time. It’s that we’re fixing broken systems.

Optimistically, I feel like I’m saving time. But then I think, “Now that I have extra time, what other project could I try?” Suddenly it’s 11 p.m., and I’m scrolling TikTok.

And you still have to check its work. You can’t fully trust it. So there’s time added on at the end.

Alexis Madrigal: How do we know what friction was good and necessary — and what we should eliminate?

Nitasha Tiku: I find it infinitely frustrating that all the systems we use daily aren’t interoperable. Why do I need separate apps for Slack, Signal, iMessage, Google Sheets? Why do I have to reformat information from a court database?

As much as we benefit from having the world’s information at our fingertips, we’ve also created enormous busywork because there’s no standardized format and companies maintain walled gardens.

Anything that benefits the user — rather than the corporation that owns the data — is a positive.

Alexis Madrigal: Years ago, Yahoo released Yahoo Pipes to connect tools. It never quite worked. Now, these AI tools seem to be fulfilling that old promise.

We’re talking about advances in artificial intelligence and how people are using them. We’re joined by Nitasha Tiku, Heather Kelly, and Maxwell Zeff.

We want to hear from you. How are you using these tools? Call us at 866-733-6786, or email us at forum@kqed.org.

We’ll be back with more right after the break.