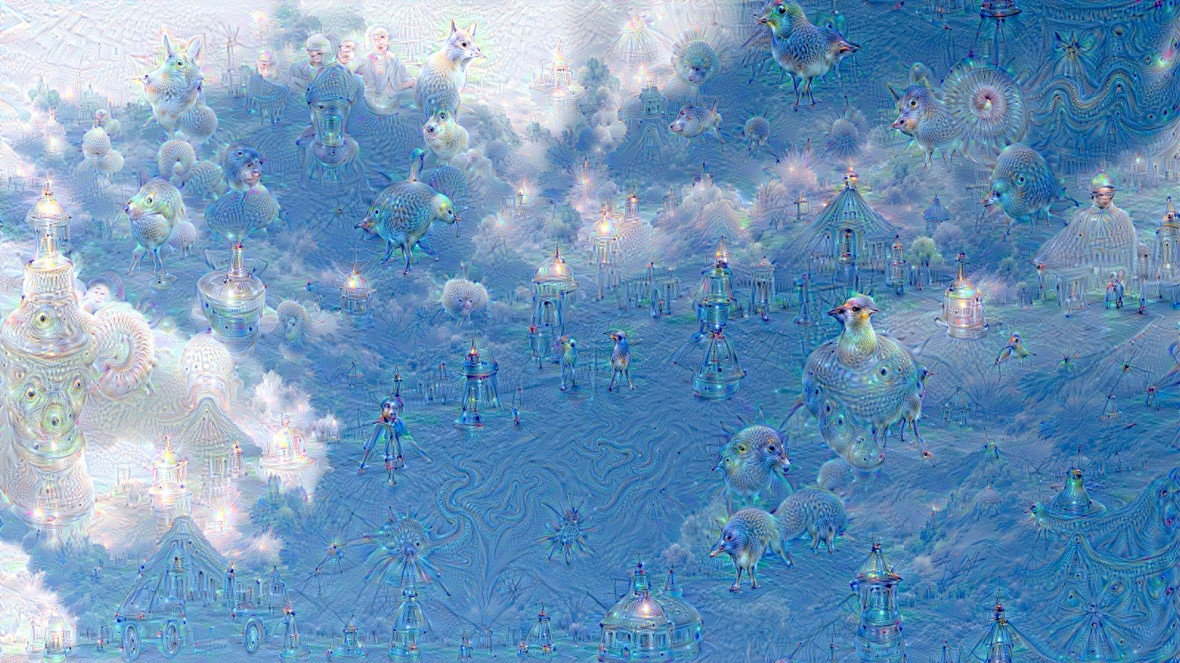

On June 17, software engineers at Google posted images on the company’s research blog that sent the internet into a tizzy. When fed images of pure static, Google computers trained to recognize images produced psychedelic bonanzas of swirling color, floating pagodas, and hybrid creatures that were part fish, part pig and part corgi.

As of today, the original post has 2,324 comments, ranging in mood from the contemplative (“Beyond the eye candy, there is actually something deeply interesting in this line of work”) to the panic-stricken (“Should I be scared?”).

The research team released the code used to create these visualizations in a follow-up post on July 1 so others could play with what they’re calling “DeepDream” — computers dreaming of and rendering all manner of electric sheep.

It wasn’t long before a number of sites popped up to let us non-coders simply upload images and wait for the deeply disturbing output. One of those, Dream Deeply, even lets you choose between “a nap” and “a night’s sleep.” That choice essentially determines how long an image runs through the system’s feedback loop: the longer it runs, the more divorced the result becomes from its original content. Now there’s even a Reddit thread related to the project.

So what’s really going on here?